Unleash the full potential of your website and elevate its online presence with our comprehensive online courses.

| Pillar | Description |
| --- | --- |
| Transparency | Disclosing when content is AI-generated (e.g., watermarking) and explaining how models make decisions. |
| Accountability | Establishing who is responsible when an AI system causes harm or provides false information. |
| Inclusive Design | Using diverse datasets and involving a wide range of stakeholders during the development process. |
| Human-in-the-Loop | Ensuring critical decisions (medical, legal, financial) are reviewed by humans rather than fully automated. |

1 Year

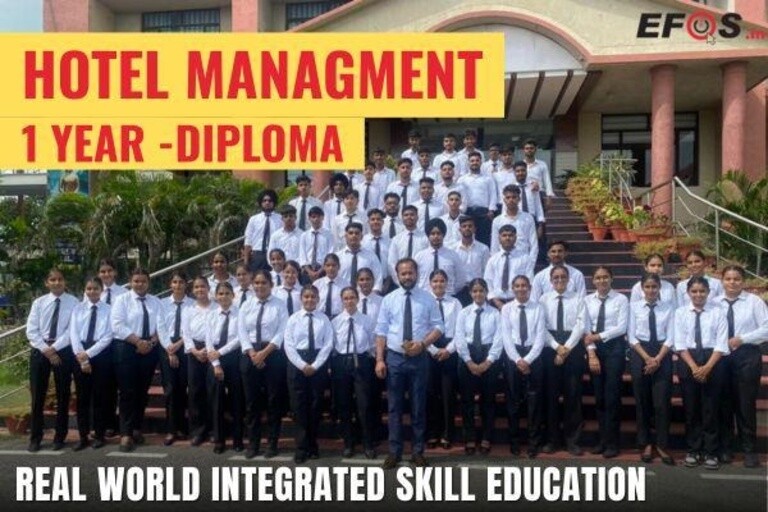

Hotel Management Diploma (RISE Program) - Learn & Earn Mode. RISE: Real-world Integrated Skills for...

6 Weeks

A Career Counsellor guides individuals in choosing the right career path based on their skills, inte...

6 Weeks

Food Safety ensures that food is handled, prepared, stored, and served in a way that prevents contam...

6 Weeks

Driving inclusive growth by connecting rural communities with education, skill development, and empl...

6 Weeks

Driving growth by identifying new business opportunities, building strategic partnerships, and expan...

6 Weeks

Focuses on transforming data into actionable insights to drive business decisions. It combines stati...