
AI hallucination is a phenomenon in which an AI system, often a generative AI chatbot built on a large language model (LLM) or a computer vision tool, perceives patterns or objects that are nonexistent or imperceptible to human observers, producing outputs that are nonsensical or altogether inaccurate.

| Impact Area | Consequences of Hallucination |
| --- | --- |
| Legal/Compliance | Fabricated case citations can lead to sanctions, fines, and disbarment (e.g., the Mata v. Avianca case). |
| Healthcare | Incorrect medical advice or non-existent treatment protocols can directly endanger patient lives. |
| Information Integrity | Hallucinations fuel the spread of misinformation, eroding public trust in digital institutions and democratic discourse. |
| Cybersecurity | AI may hallucinate threat vulnerabilities or suggest incorrect remediation steps, leaving systems exposed. |

Responsible AI Rule: Never publish or act on AI-generated content in high-stakes environments (legal, medical, financial) without manual verification by a subject matter expert.
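One way to enforce this rule in a publishing workflow is a simple review gate. The sketch below is illustrative only: the `Draft` class, the `can_publish` function, and the `HIGH_STAKES_DOMAINS` set are hypothetical names chosen for this example, not part of any real library, and the domain list simply mirrors the three named in the rule.

```python
from dataclasses import dataclass
from typing import Optional

# Assumption: the high-stakes domains are the three named in the rule above.
HIGH_STAKES_DOMAINS = {"legal", "medical", "financial"}


@dataclass
class Draft:
    """AI-generated content waiting to be published (hypothetical type)."""
    text: str
    domain: str
    reviewed_by: Optional[str] = None  # name of the subject matter expert, if any


def can_publish(draft: Draft) -> bool:
    """Allow publishing only if the draft is low-stakes or an expert signed off."""
    if draft.domain.strip().lower() in HIGH_STAKES_DOMAINS:
        # High-stakes content needs a non-empty reviewer name.
        return bool(draft.reviewed_by and draft.reviewed_by.strip())
    return True


unreviewed = Draft(text="Summary of case law...", domain="Legal")
reviewed = Draft(text="Summary of case law...", domain="Legal", reviewed_by="A. Counsel")

print(can_publish(unreviewed))  # blocked: no expert sign-off
print(can_publish(reviewed))    # allowed: an expert has reviewed it
```

A gate like this only records that a review happened; it cannot judge whether the review was thorough, so it complements expert verification rather than replacing it.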

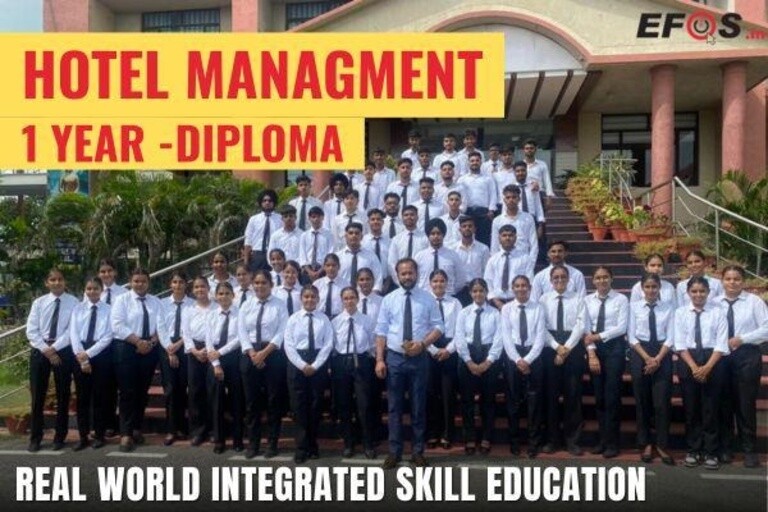
